Introduction to VPC Elastic Network Interfaces

An elastic network interface (ENI) allows an instance to communicate with other network resources, including AWS services, other instances, on-premises servers, and the Internet. It also lets you connect to the operating system running on your instance to manage it. As the name suggests, an ENI performs the same basic function as a network interface on a physical server, although ENIs are more restricted in how you can configure them. Every instance must have a primary network interface (also known as the primary ENI), which is connected to exactly one subnet. This is why you have to specify a subnet when launching an instance. You can't remove the primary ENI from an instance.


Primary and Secondary Private IP Addresses

Each instance must have a primary private IP address from the range specified by the subnet CIDR. The primary private IP address is bound to the primary ENI of the instance. You can't change or remove this address, but you can assign secondary private IP addresses to the primary ENI. Any secondary addresses must come from the same subnet the ENI is attached to. You can also attach additional ENIs to an instance. Those ENIs may be in a different subnet, but they must be in the same Availability Zone as the instance. As always, any addresses associated with an ENI must come from the subnet to which that ENI is attached.
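The subnet rule for ENI addresses amounts to a CIDR membership test. The sketch below uses Python's standard `ipaddress` module; `validate_eni_addresses` is a hypothetical helper that only models the rule (AWS enforces it for you when you assign addresses), and the subnet and addresses are illustrative.

```python
import ipaddress


def validate_eni_addresses(subnet_cidr: str, primary: str, secondaries: list[str]) -> None:
    """Hypothetical helper: raise ValueError if any ENI address is outside its subnet.

    This only models the rule described in the text; it is not an AWS API.
    """
    subnet = ipaddress.ip_network(subnet_cidr)
    # The primary and every secondary private address must fall inside the subnet CIDR.
    for addr in [primary, *secondaries]:
        if ipaddress.ip_address(addr) not in subnet:
            raise ValueError(f"{addr} is not in subnet {subnet}")


# 172.31.16.0/20 spans 172.31.16.0 through 172.31.31.255.
validate_eni_addresses("172.31.16.0/20", "172.31.16.10", ["172.31.16.11"])
```

Passing an address such as 172.31.32.5 for that subnet raises `ValueError`, mirroring the requirement that every address comes from the ENI's own subnet.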

Attaching Elastic Network Interfaces

An ENI can exist independently of an instance. You can create an ENI first and then attach it to an instance later. For example, you can create an ENI in one subnet and then attach it to an instance as the primary ENI when you launch the instance. If you disable the Delete on Termination attribute of the ENI, you can terminate the instance without deleting the ENI. You can then associate the ENI with another instance. You can also take an existing ENI and attach it to an existing instance as a secondary ENI. This lets you redirect traffic from a failed instance to a working instance by detaching the ENI from the failed instance and reattaching it to the working instance. Complete Exercise 4.3 to practice creating an ENI and attaching it to an instance.
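The failover pattern described above can be modeled as moving an ENI object from one instance to another. This is a toy in-memory model, not the EC2 API: the `ENI` and `Instance` classes, the `attach`, `detach`, and `terminate` helpers, and all IDs are hypothetical, and the model skips details such as the Availability Zone check.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ENI:
    eni_id: str
    subnet: str
    delete_on_termination: bool = True
    attached_to: Optional[str] = None  # instance ID, or None while detached


@dataclass
class Instance:
    instance_id: str
    enis: list = field(default_factory=list)


def attach(eni: ENI, instance: Instance) -> None:
    # Hypothetical helper: an ENI can be attached to only one instance at a time.
    if eni.attached_to is not None:
        raise ValueError(f"{eni.eni_id} is already attached to {eni.attached_to}")
    eni.attached_to = instance.instance_id
    instance.enis.append(eni)


def detach(eni: ENI, instance: Instance) -> None:
    instance.enis.remove(eni)
    eni.attached_to = None


def terminate(instance: Instance) -> list:
    """Detach every ENI and return only those with Delete on Termination disabled."""
    survivors = []
    for eni in list(instance.enis):  # copy, because detach() mutates instance.enis
        detach(eni, instance)
        if not eni.delete_on_termination:
            survivors.append(eni)
    return survivors
```

Usage: with an ENI created with `delete_on_termination=False` and attached to a failed instance, calling `detach(eni, failed)` and then `attach(eni, standby)` moves the interface, and the addresses bound to it, to the working instance.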

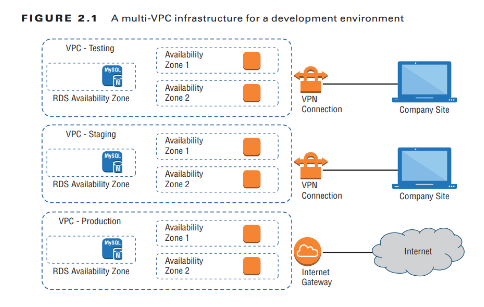

Internet Gateways

An Internet gateway gives instances the ability to receive a public IP address, connect to the Internet, and receive requests from the Internet. When you create a VPC, it does not have an Internet gateway associated with it. You must create an Internet gateway and associate it with the VPC manually. You can associate only one Internet gateway with a VPC, but you can create multiple Internet gateways and associate each one with a different VPC. An Internet gateway is somewhat analogous to the Internet router that an Internet service provider may install on your premises, but in AWS an Internet gateway doesn't behave exactly like a router. In a traditional network, you might configure your core router with a default gateway IP address pointing to the Internet router to give your servers access to the Internet. An Internet gateway, however, doesn't have a management IP address or network interface. Instead, AWS identifies an Internet gateway by its resource ID, which begins with igw- followed by an alphanumeric string. To use an Internet gateway, you must create a default route in a route table that points to the Internet gateway as a target.
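The one-gateway-per-VPC rule and the igw- ID format can be captured in a small registry. This is a hypothetical model for illustration only: `IGWRegistry` and the IDs used with it are invented, and in practice AWS performs these checks when you attach a gateway.

```python
from typing import Optional


class IGWRegistry:
    """Hypothetical toy model of Internet gateway attachments (not an AWS API)."""

    def __init__(self) -> None:
        self._vpc_by_igw: dict[str, str] = {}  # gateway ID -> VPC ID

    def attach(self, igw_id: str, vpc_id: str) -> None:
        # Gateways are identified only by a resource ID beginning with "igw-".
        if not igw_id.startswith("igw-"):
            raise ValueError(f"{igw_id!r} is not an Internet gateway ID")
        # Each gateway serves a single VPC ...
        if igw_id in self._vpc_by_igw:
            raise ValueError(f"{igw_id} is already attached to {self._vpc_by_igw[igw_id]}")
        # ... and each VPC can have only one gateway.
        if vpc_id in self._vpc_by_igw.values():
            raise ValueError(f"{vpc_id} already has an Internet gateway")
        self._vpc_by_igw[igw_id] = vpc_id

    def igw_for(self, vpc_id: str) -> Optional[str]:
        for igw_id, attached_vpc in self._vpc_by_igw.items():
            if attached_vpc == vpc_id:
                return igw_id
        return None
```

Attaching the gateway is only half of the job; instances still cannot use it until a route table contains a default route whose target is the ID returned by `igw_for`.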

Route Tables

Configurable virtual routers do not exist as VPC resources. Instead, the VPC infrastructure implements IP routing as a software function, which AWS calls an implied router (sometimes also called an implicit router). This means there's no virtual router on which to configure interface IP addresses or dynamic routing protocols. You only have to manage the route table that the implied router uses. Each route table consists of one or more routes and can be associated with one or more subnets. Think of a route table as being connected to multiple subnets in much the same way a traditional router would be. When you create a VPC, AWS automatically creates a default route table called the main route table and associates it with every subnet in that VPC. You can use the main route table or create a custom route table and manually associate it with one or more subnets. If you do not explicitly associate a subnet with a route table you've created, AWS implicitly associates it with the main route table. A subnet cannot exist without a route table association.
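The main-table fallback behaves like a dictionary lookup with a default value. The sketch below is a hypothetical model (`VPCRouting` and the rtb- and subnet- IDs are illustrative); it assumes, as in AWS, that a subnet has only one route table association at a time, so a new explicit association replaces the previous one.

```python
class VPCRouting:
    """Hypothetical model of main versus custom route table associations."""

    def __init__(self, main_table_id: str) -> None:
        self.main_table_id = main_table_id
        self.explicit: dict[str, str] = {}  # subnet ID -> custom route table ID

    def associate(self, subnet_id: str, table_id: str) -> None:
        # One association per subnet: a new association replaces the old one.
        self.explicit[subnet_id] = table_id

    def disassociate(self, subnet_id: str) -> None:
        # Dropping the explicit association leaves the implicit main-table one.
        self.explicit.pop(subnet_id, None)

    def effective_table(self, subnet_id: str) -> str:
        # Every subnet always resolves to some route table.
        return self.explicit.get(subnet_id, self.main_table_id)
```

Because `effective_table` never returns an empty result, the model also reflects the rule that a subnet cannot exist without a route table association.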

Routes

Routes determine how to forward traffic from instances within the subnets associated with the route table. IP routing is destination-based, meaning that routing decisions are based only on the destination IP address, not the source. When you create a route, you must provide the following elements:

- Destination

- Target

The destination must be an IP prefix in CIDR notation. The target must be an AWS network resource such as an Internet gateway or an ENI; it cannot be a CIDR. Every route table contains a local route that allows instances in different subnets of the VPC to communicate with each other. Table 4.2 shows what this route would look like in a VPC with the CIDR 172.31.0.0/16: the destination is 172.31.0.0/16 and the target is local.
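The two required route elements, and the rule that a target cannot be a CIDR, can be checked with Python's `ipaddress` module. `Route` and `local_route` are hypothetical names, and the IDs are placeholders. The model rejects a destination with host bits set (for example 172.31.0.5/16) because `ip_network` is strict by default; that is a choice of this sketch, not documented AWS behavior.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    destination: str  # IP prefix in CIDR notation, e.g. "0.0.0.0/0"
    target: str       # resource ID such as "igw-..." or "eni-...", or "local"

    def __post_init__(self) -> None:
        # The destination must parse as a CIDR prefix (raises ValueError otherwise).
        ipaddress.ip_network(self.destination)
        # The target must be a resource, so anything that parses as a prefix is rejected.
        try:
            ipaddress.ip_network(self.target)
        except ValueError:
            return
        raise ValueError(f"target {self.target!r} is a CIDR, not a network resource")


def local_route(vpc_cidr: str) -> Route:
    """Hypothetical helper: the local route present in every route table."""
    return Route(destination=vpc_cidr, target="local")
```

For the VPC in the text, `local_route("172.31.0.0/16")` produces the single route with destination 172.31.0.0/16 and target local.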

The Default Route

The local route is the only mandatory route that exists in every route table. It's what allows communication between instances in the same VPC. If a route table contains only the local route, there is no route for any other IP prefix, so traffic destined for an address outside the VPC CIDR range is dropped. To enable Internet access for your instances, you must create a default route pointing to the Internet gateway. After you add it, the route table contains two routes: the local route (destination 172.31.0.0/16, target local) and the default route (destination 0.0.0.0/0, target the Internet gateway's igw- ID).

The 0.0.0.0/0 prefix encompasses all IP addresses, including those of hosts on the Internet. This is why it's always listed as the destination in a default route. Any subnet that is associated with a route table containing a default route pointing to an Internet gateway is called a public subnet. Contrast this with a private subnet, whose route table has no default route pointing to an Internet gateway. Notice that the 0.0.0.0/0 and 172.31.0.0/16 prefixes overlap. When deciding where to route traffic, the implied router uses the most specific matching route, that is, the route with the longest matching prefix. Suppose an instance sends a packet to the Internet address 198.51.100.50. Because 198.51.100.50 does not match the 172.31.0.0/16 prefix but does match the 0.0.0.0/0 prefix, the implied router uses the default route and sends the packet to the Internet gateway. A packet to 172.31.5.9, by contrast, matches both prefixes, and the more specific 172.31.0.0/16 local route wins. AWS documentation speaks of one implied router per VPC. It's important to understand that the implied router doesn't actually exist as a discrete resource; it's an abstraction of an IP routing function. Nevertheless, you may find it helpful to think of each route table as a separate implied router. Follow the steps in Exercise 4.4 to create an Internet gateway and a default route.
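Longest-prefix matching, together with the drop behavior when nothing matches, fits in a few lines. In this sketch a route table is a hypothetical dict mapping destination prefix to target; `lookup` returns `None` to stand for a dropped packet, and `is_public` treats a subnet's table as public when it has a 0.0.0.0/0 route whose target ID starts with igw-. The names and the igw-example ID are illustrative.

```python
import ipaddress
from typing import Optional


def lookup(routes: dict[str, str], dest_ip: str) -> Optional[str]:
    """Return the target of the most specific matching route, or None (dropped)."""
    addr = ipaddress.ip_address(dest_ip)
    best = None  # (network, target) with the longest prefix seen so far
    for prefix, target in routes.items():
        net = ipaddress.ip_network(prefix)
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, target)
    return best[1] if best else None


def is_public(routes: dict[str, str]) -> bool:
    # Public subnet: its route table has a default route to an Internet gateway.
    return routes.get("0.0.0.0/0", "").startswith("igw-")


private = {"172.31.0.0/16": "local"}
public = {"172.31.0.0/16": "local", "0.0.0.0/0": "igw-example"}
```

With these tables, `lookup(private, "198.51.100.50")` finds no match (the packet is dropped), while `lookup(public, "198.51.100.50")` falls through to the default route and `lookup(public, "172.31.5.9")` still picks the /16 local route.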

Security Groups

A security group functions as a firewall that controls traffic to and from an instance by permitting traffic to ingress or egress that instance’s ENI. Every ENI must have at least one security group associated with it. One ENI can have multiple security groups attached, and the same security group can be attached to multiple ENIs. In practice, because most instances have only one ENI, people often think of a security group as being attached to an instance. When an instance has multiple ENIs, take care to note whether those ENIs use different security groups. When you create a security group, you must specify a group name, description, and VPC for the group to reside in. Once you create the group, you specify inbound and outbound rules to allow traffic through the security group.


Inbound Rules

Inbound rules specify what traffic is allowed into the attached ENI. An inbound rule consists of three required elements:

- Source

- Protocol

- Port range

When you create a security group, it doesn't contain any inbound rules. Security groups use a default-deny approach, also called whitelisting, which denies all traffic that is not explicitly allowed by a rule. When you create a new security group and attach it to an instance, all inbound traffic to that instance is blocked, so you must create inbound rules to allow traffic to your instance. Because security group rules can only allow traffic, never deny it, the order of rules in a security group doesn't matter. Suppose you have an instance running an HTTPS-based web application. You want to allow anyone on the Internet to connect to this instance, so you'd need an inbound rule to allow all TCP traffic coming in on port 443 (the default port and protocol for HTTPS). To manage this instance using SSH, you'd need another inbound rule for TCP port 22. However, you don't want to allow SSH access from just anyone; you want to allow it only from the IP address 198.51.100.10. To achieve this, you would use a security group containing the inbound rules listed in Table 4.3: source 0.0.0.0/0, protocol TCP, port 443; and source 198.51.100.10/32, protocol TCP, port 22.

The prefix 0.0.0.0/0 covers all valid IP addresses, so the HTTPS rule would allow access not only from hosts on the Internet but also from all instances in the VPC.
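The default-deny evaluation can be sketched as "allow only if some rule matches source, protocol, and port". `InboundRule`, `allowed`, and `WEB_SERVER_RULES` are hypothetical names, and the two rules mirror the HTTPS and SSH rules described above. Because `any()` ignores the order of the list, the sketch also shows why rule order doesn't matter. It models inbound rules only.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    source: str     # CIDR, e.g. "0.0.0.0/0" or "198.51.100.10/32"
    protocol: str   # e.g. "tcp"
    from_port: int
    to_port: int


def allowed(rules: list[InboundRule], src_ip: str, protocol: str, port: int) -> bool:
    """Default deny: traffic passes only if at least one rule matches it."""
    addr = ipaddress.ip_address(src_ip)
    return any(
        addr in ipaddress.ip_network(rule.source)
        and rule.protocol == protocol
        and rule.from_port <= port <= rule.to_port
        for rule in rules
    )


WEB_SERVER_RULES = [
    InboundRule("0.0.0.0/0", "tcp", 443, 443),        # HTTPS from anywhere
    InboundRule("198.51.100.10/32", "tcp", 22, 22),  # SSH from one admin address
]
```

An empty rule list denies everything, which matches the behavior of a newly created security group; an HTTPS request from a VPC address such as 172.31.20.4 is allowed by the 0.0.0.0/0 rule, as the text points out.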

Read More : https://www.info-savvy.com/introduction-to-vpc-elastic-network-interfaces/

————————————————————————————————————

This Blog Article is posted by

Infosavvy, 2nd Floor, Sai Niketan, Chandavalkar Road Opp. Gora Gandhi Hotel, Above Jumbo King, beside Speakwell Institute, Borivali West, Mumbai, Maharashtra 400092

Contact us – www.info-savvy.com